Collaborative and interactive work for attendees’ participation, mobile ensemble, Wi-Fi network, and real-time algorithmic score generation system.

The main idea of the work is to explore how we can reappropriate new technologies to implode the usual roles that occur on stage (public, performers, creator).

This work attempts to dissolve the stage identities of the event attendees.

The attendees interact with a score creation system through an application installed on their own mobile phones. The information generated by each public-interpreter's interaction is sent via Wi-Fi to the score generator, which sends back the musical notes to be sung. Each public-interpreter can choose whether to sing the received notes or have the mobile device play them. The sounding result is the mix of the sounds made by the phones and/or the notes sung by the public-interpreters.
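The exchange described above can be illustrated with a minimal Python sketch. It is not the system used in the piece: the JSON message fields (`client`, `value`, `playback`), the C major pitch pool, and the functions `generate_notes` and `handle_message` are all hypothetical, chosen only to show one request from a phone and one reply from a score generator. The network transport is left out, so the round trip is simulated in-process.

```python
import json
import random

# Hypothetical pitch pool: one octave of C major as MIDI note numbers.
SCALE = [60, 62, 64, 65, 67, 69, 71, 72]


def generate_notes(interaction, count=4, seed=None):
    """Map an interaction value in [0, 1] to a short list of MIDI pitches."""
    rng = random.Random(seed)
    # Clamp the value, then use it to pick a centre within the scale.
    value = min(max(float(interaction), 0.0), 1.0)
    centre = round(value * (len(SCALE) - 1))
    notes = []
    for _ in range(count):
        # Step at most one scale degree away from the centre.
        index = min(max(centre + rng.choice([-1, 0, 1]), 0), len(SCALE) - 1)
        notes.append(SCALE[index])
    return notes


def handle_message(raw, seed=None):
    """Decode one message from a phone and build the reply sent back to it."""
    message = json.loads(raw.decode("utf-8"))
    reply = {
        "client": message["client"],
        "notes": generate_notes(message["value"], seed=seed),
        # The participant chooses whether to sing or let the phone play.
        "playback": message.get("playback", "voice"),
    }
    return json.dumps(reply).encode("utf-8")


# Simulated round trip; in a real setup the bytes would travel over Wi-Fi.
request = json.dumps(
    {"client": "phone-1", "value": 0.5, "playback": "device"}
).encode("utf-8")
response = json.loads(handle_message(request, seed=1))
print(response["notes"])
```

Keeping the note generation separate from the message handling means the mapping from interaction to pitches could be swapped for any other algorithm without touching the exchange itself.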

Who is the creator of the work? The composer, the public, the MacBook Pro, the algorithm?

The first staging took place on June 3 as part of the cycle “feel, create, transform” at the premises of the CNT-AIT.

Video of the first staging of Public Music.

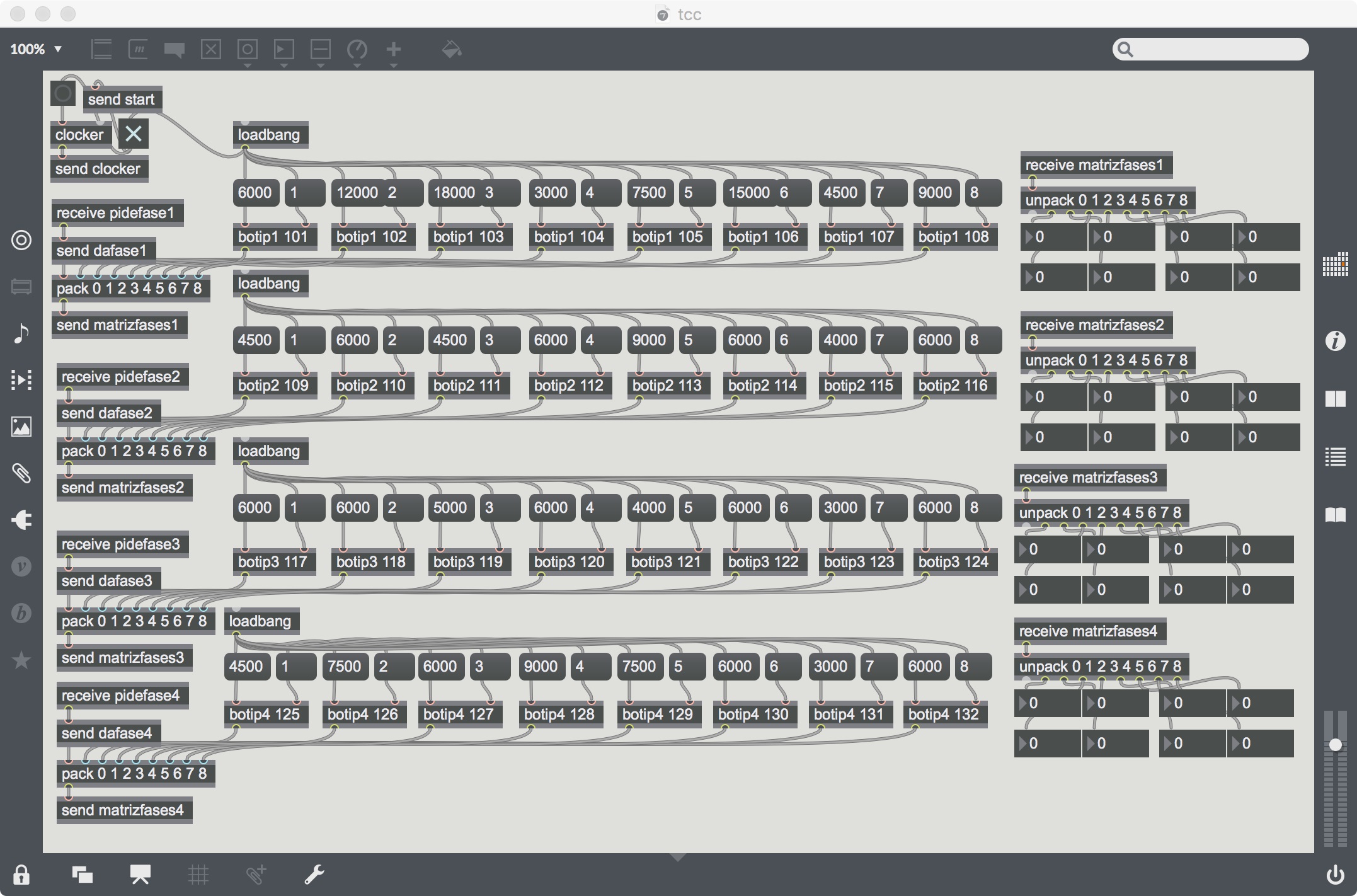

Real-time algorithmic score generation system and the event server.

Installing the mobile application before the event.

Main Max window. Each bot shown is associated with the IP address of a mobile phone.

References

[1] Egido, Fernando. Artificial Computational Creativity based on Collaborative Intelligence (2020). Presented at the Artificial Intelligence Music Creativity 2021 conference in Graz.

Similar works

Horror Vacui (2017)

Collaboration as an Act of Creativity (2022)

When the Self of Us Becomes Ours (2019)

The Human in the Loop (2025)

Public stage (2023)