Humanity as a Sensory System is a work for two voices and ensemble, live notation, a live generative system, live electronics, and audience participation. It is a collaborative, interactive work that is created in real time by its own evaluation. Attendees evaluate the work through a web app, and the musical generative system changes in real time according to their evaluations. The musicians receive the notes on their mobile phones via a live notation system. The work belongs to a series in which the composer builds self-referential musical generative systems based on the real-time evaluation of the work itself. Its main musical material is its own evaluation. The work lasts about 8 minutes. The title refers to the embodiment that participation in the event creates among the attendees when they collaborate to create something.

This work is a creative, hybrid, networked framework that integrates the musical generative system with its evaluation: the attendees, the generative system, and the musicians together form a single networked creative system. It is at once a musical and a social experiment. The data from the attendees' evaluations will be recorded, processed, and shared as open data.

Humanity as a Sensory System as an aesthetic-social experiment

As an aesthetic-social experiment, the work investigates three questions. First, we want to know whether the same cooperative patterns recur across the various realizations of the work, or whether the attendees' evaluations are completely different in each realization.

Second, we want to know whether taking part in the evaluation generates a new way of listening to, and engaging with, the work [2]. Do we listen in the same way when we are creating the work, changing it through its very own evaluation? How does participating in the real-time assessment of the work influence the way it is heard?

Third, is there something like a collective listening embodiment? Can we say that the attendees work as a collective embodied sensory system, or do they act individually?

The attendees will fill out a survey after the performance.

Description of the project

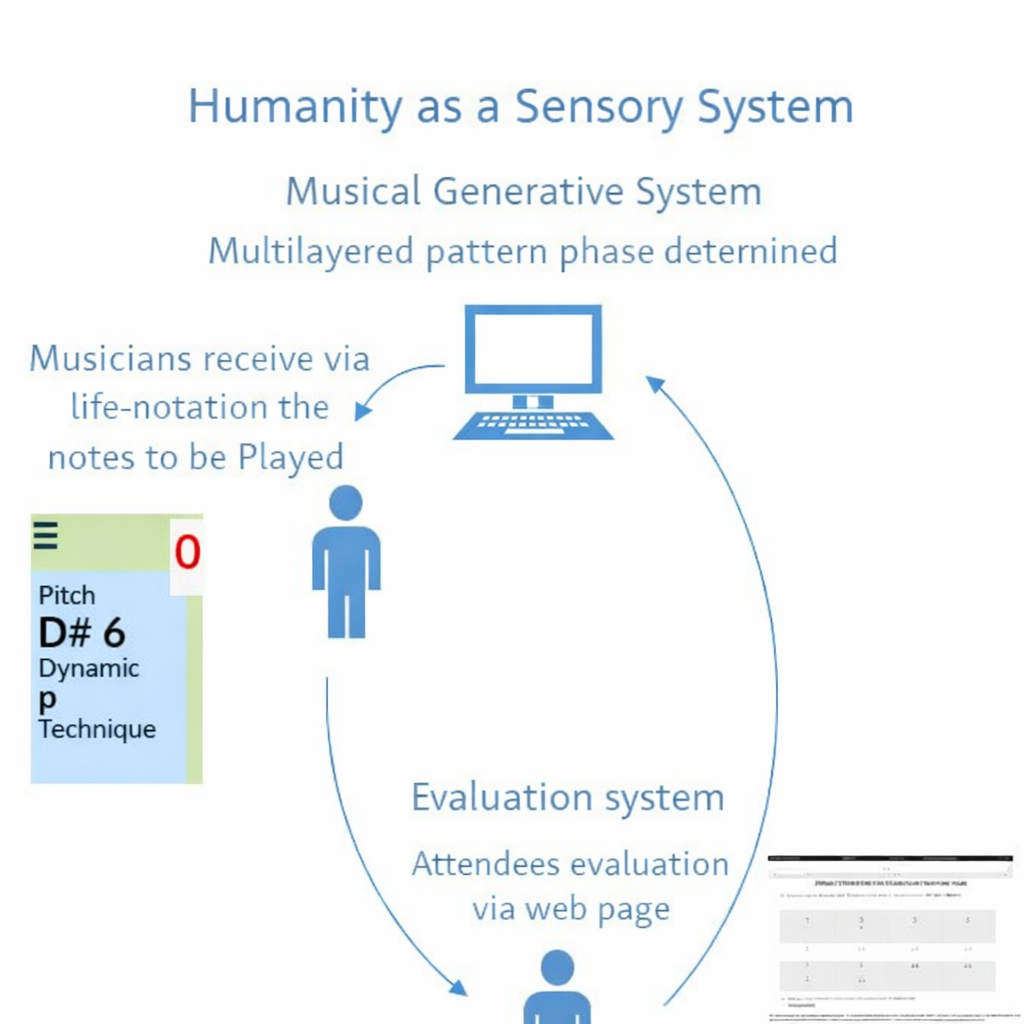

The form and narrative of the work is the history of its evaluation. The formal development of the materials is not given a priori; it depends on the real-time evaluation of the attendees. The work is conceived as a loop between the generative system and the attendees.

Fig. 1. Circular self-referential generative system scheme.

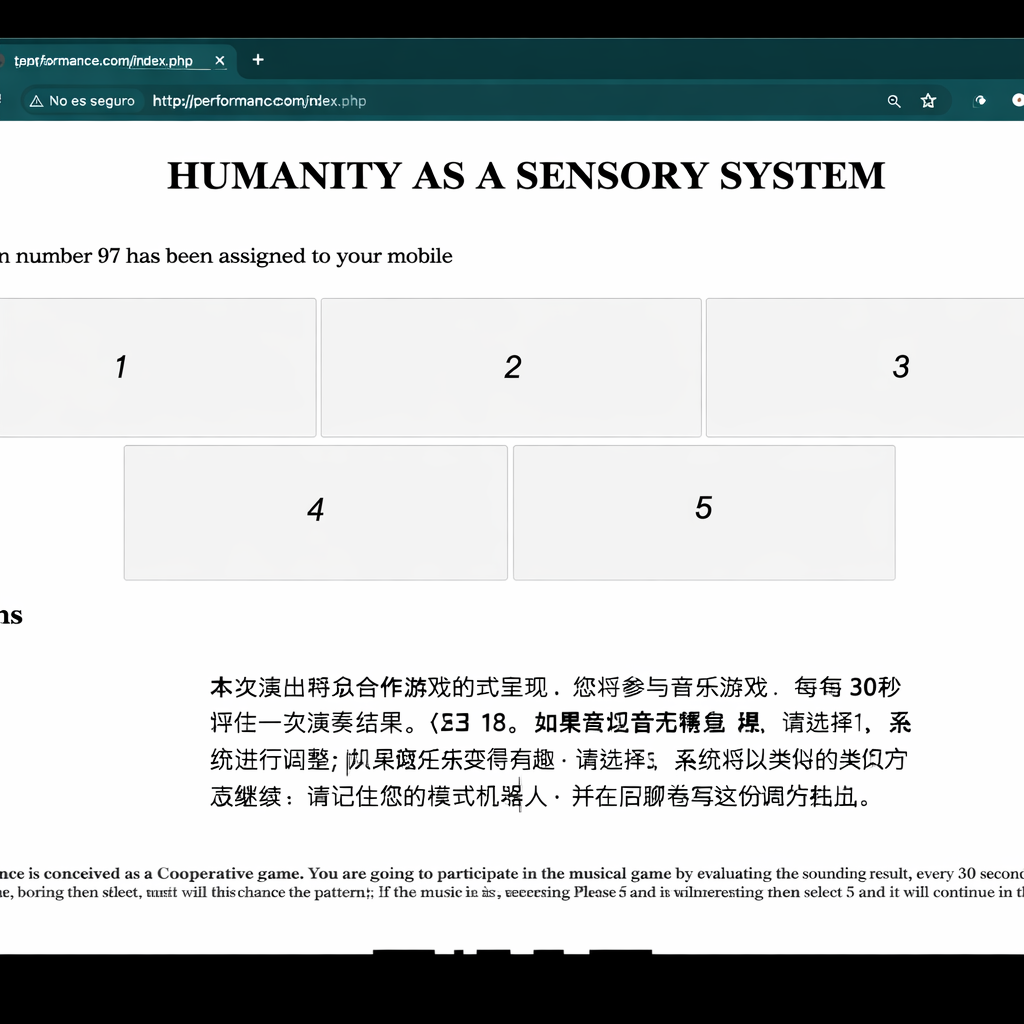

This Work is a circular self-referential musical cooperative game. The attendees connect to a Wi-Fi network and then to a web server. They will interact with the system through a web page where they will evaluate the sounding result every 30 seconds. The generative system will react to the evaluations. If the evaluations are positive, the system will not change. If the evaluations are negative, the system will change, and the sounding result will change.

Fig.2. This is the web application where evaluations are made.

The generative system of the work consists of a series of patterns, each implemented as an autonomous bot (a pattern-bot), that repeat at different times. Each time a pattern begins its cycle, it measures its relative position (phase) with respect to the other patterns and uses it to determine the parameter values of the events belonging to that pattern.
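The phase measurement described above can be illustrated with a minimal Python sketch. It is not the work's actual implementation: the class `PatternBot`, the period values, and the mapping in `event_parameters` from phases to pitch and loudness are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PatternBot:
    name: str
    period: float  # cycle length in seconds (assumed unit)

    def phase_at(self, t: float) -> float:
        """Position inside the current cycle, as a fraction in [0, 1)."""
        return (t % self.period) / self.period


def relative_phases(bot, others, t):
    """Phases of the other bots at time t, when `bot` begins a new cycle."""
    return {o.name: o.phase_at(t) for o in others if o is not bot}


def event_parameters(phases):
    """Hypothetical mapping from the measured phases to event parameters."""
    mean = sum(phases.values()) / len(phases) if phases else 0.0
    return {
        "pitch": 48 + round(mean * 24),     # MIDI-like note number
        "loudness": 40 + round(mean * 64),  # MIDI-like 0-127 scale
    }


# Three bots with illustrative periods of 4, 8, and 16 seconds.
bots = [PatternBot("a", 4.0), PatternBot("b", 8.0), PatternBot("c", 16.0)]
t = 4.0  # bot "a" begins its second cycle at this moment
phases = relative_phases(bots[0], bots, t)
params = event_parameters(phases)
print(phases, params)
```

Because the periods differ, the phases that a bot observes at the start of each of its cycles keep shifting, so the same pattern yields different event parameters over time.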

Every 30 seconds, the attendees evaluate the evolution of the work: they select 5 if they like it or 1 if they do not. Each evaluation changes the velocity or the repetition of the attendee's associated pattern-bot, which in turn changes the work.
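The evaluation loop can be sketched in the same hedged way. The sketch below assumes that the votes of one 30-second window are averaged per pattern-bot, that the midpoint 3 separates positive from negative evaluations, and that "velocity" means the speed of the cycle; none of these details is specified in the text, and the names `BotState` and `apply_window` are hypothetical.

```python
import random
from collections import defaultdict
from dataclasses import dataclass

LIKE, DISLIKE = 5, 1
NEUTRAL = (LIKE + DISLIKE) / 2  # assumed threshold: the midpoint, 3.0


@dataclass
class BotState:
    period: float     # seconds per cycle (stands in for "velocity")
    repetitions: int  # how many times the pattern repeats


def apply_window(bots, votes, rng):
    """Apply one window of (bot_name, score) votes; return the changed bots."""
    by_bot = defaultdict(list)
    for bot_name, score in votes:
        by_bot[bot_name].append(score)

    changed = []
    for name, scores in by_bot.items():
        if sum(scores) / len(scores) >= NEUTRAL:
            continue  # positive evaluation: the pattern stays as it is
        state = bots[name]
        if rng.random() < 0.5:
            # Assumed change: halve or double the cycle length.
            state.period *= rng.choice([0.5, 2.0])
        else:
            # Assumed change: one repetition more or fewer, never below 1.
            state.repetitions = max(1, state.repetitions + rng.choice([-1, 1]))
        changed.append(name)
    return changed


bots = {"a": BotState(4.0, 2), "b": BotState(8.0, 3)}
votes = [("a", 5), ("a", 5), ("a", 1), ("b", 1), ("b", 1), ("b", 5)]
changed = apply_window(bots, votes, random.Random(0))
print(changed, bots)
```

In this example bot "a" receives mostly 5s and is left untouched, while bot "b" receives mostly 1s and has either its period or its repetition count altered.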

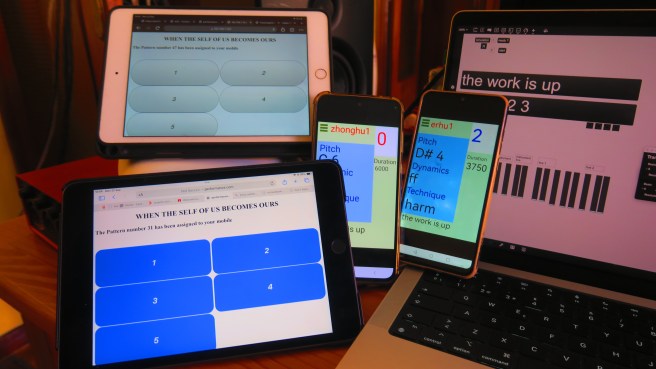

Some of the pattern-bots will control the instruments by sending them via a real-time notation system the notes to be played by the musicians via a real-time notation system.

Other Agents will control the live electronic synthesis motor generation of the electronic sounds.

Fig. 3. This is the application used by the interpreters to receive the notes to be played. The musician will receive the exact note to be played.

Technical setup: an iPad connected to the web server, Android mobile devices that receive the notes via live notation, a laptop running the Max patch, and a virtual machine hosting the Ubuntu web server.

References

- [1] Egido, Fernando. Artificial Computational Creativity based on Collaborative Intelligence (2020). Presented at Artificial Intelligence Music Creativity 2021, Graz.

- [2] Egido, Fernando. Taxonomy of listening tension based on cognitive dissonance (2024). Presented at the Bled Contemporary Musical week 2025.

- [3] Egido, Fernando. Towards an Aesthetics of Cognitive-Parametric Music.

Similar works

- Horror Vacui (2017)

- Collaboration as an Act of Creativity (2022)

- Public stage (2023)

- The Human in the Loop (2025)

- When the Self of Us Becomes Ours (2025)